From jaw-dropping weight loss transformations to viral ‘glow ups’, fitness and beauty influencers are hijacking our social feeds at an unprecedented pace.

But the reality behind the screen may be far more sinister.

That person giving you health advice might be a total digital fake — and millions of us are unknowingly falling for the trap.

One account in particular boasts a whopping 4.2 million likes, with one video even amassing over 25 million views.

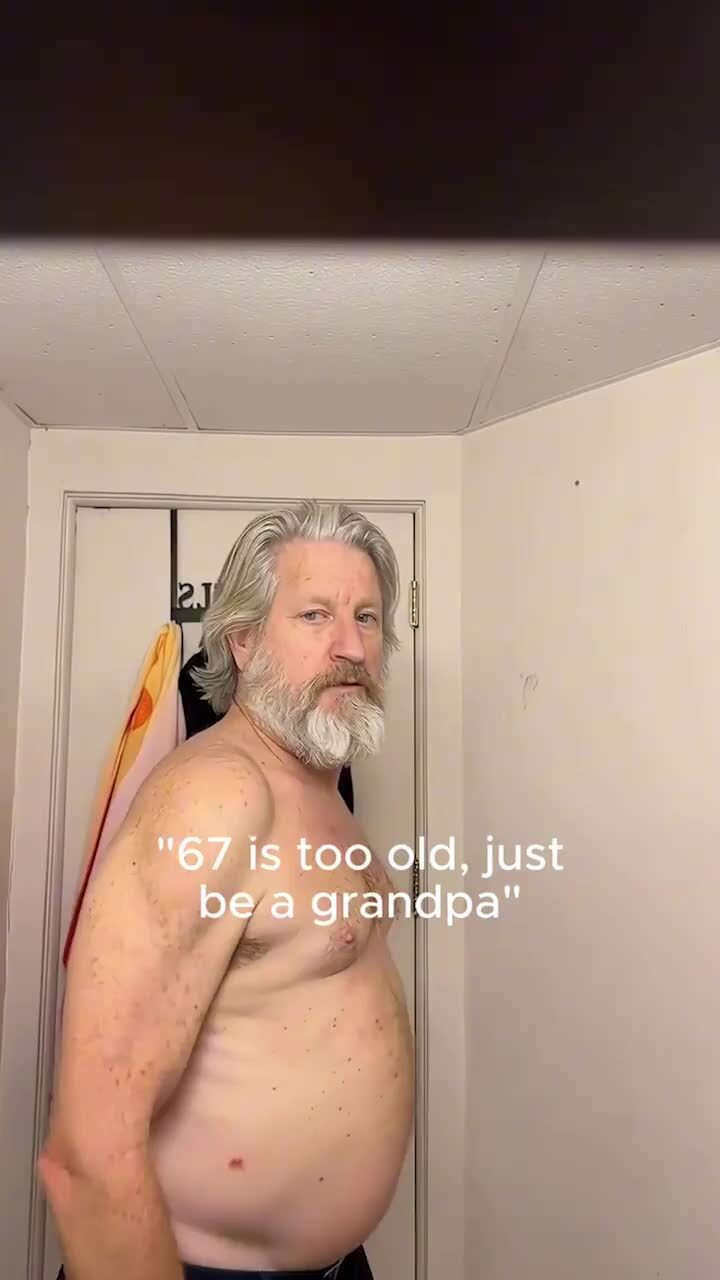

The TikTok account, which goes by the name Anderson Rhoe, depicts a 67-year-old man undergoing a stark fitness transformation.

Hundreds of thousands of people have flooded the comment section in praise of the incredible results, yet experts believe it’s all entirely AI-generated.

“It is becoming much harder for the average person to tell what is real online,” Western Sydney University AI expert, Armin Alimardani, told news.com.au.

“In this case, I had to watch the account’s videos multiple times to spot any signs of AI, which shows how convincing this content has become.”

The account claims the eye-catching transformation is the result of using a fitness program called the 75Me App.

However, a spokesperson for the app denied any knowledge of the fake account.

“It’s not our account,” 75Me told news.com.au. “We just pay influencers to promote our app.”

News.com.au has also used computer vision techniques including texture analysis and contextual logic to review the account and determined it was fake.

Our in-house analysis tools check for a number of different signs of fake imagery, and detected the SynthID watermark within the visual components of this video, indicating the involvement of Google AI in its creation or significant modification.

The analysis also identified multiple positive markers of AI generation, including a “notable and sudden shift in facial structure and muscle definition” in Anderson’s face throughout the video.

Mr Alimardani warns that the true threat goes far beyond just one viral TikTok page.

“The danger is not just that people may be tricked by a fake account,” Mr Alimardani explained.

“It is that hyper-realistic AI can industrialise deception in the health and fitness space, promoting unattainable standards and manipulating vulnerable consumers.

“What makes this especially troubling is that these industries already trade heavily on aspiration and insecurity.”

Fake photos and heavily edited content are nothing new, but the sheer scale and speed of emerging AI content is the concerning part.

Mr Alimardani believes that what makes this new era of AI-generated ads so unsettling is how cheap and easy it is to produce.

“With generative AI, it is possible to create highly realistic videos in hours or days at very low cost,” he said.

And the technology is only getting more advanced.

Mr Alimardani warns the next generation of AI advertising could generate custom videos tailored specifically to the person holding the phone.

“People may no longer just be shown an idealised stranger, they may be shown a customised, AI-generated version of who they could imagine themselves becoming,” he said.

Rise of AI-generated accounts

The account under the name ‘Anderson Rhoe’ is just one example of a massive, industry-wide proliferation of AI-generated accounts emerging online.

Late last year, we witnessed the emergence of a highly controversial “AI actress” going by the name Tilly Norwood.

First appearing in late 2025, the hyper-realistic ‘fake’ star amassed a massive following online, with her creators even releasing an AI-generated music video.

It triggered severe backlash from the entertainment community, with the SAG-AFTRA actors’ union publicly condemning the existential threat AI poses to human acting jobs.

Similarly, in 2023, a Barcelona-based agency sparked global controversy when they created a ‘fake’ pink-haired fitness model named Aitana Lopez.

Designed entirely by artificial intelligence, the account quickly became a commercial powerhouse for the agency.

The ‘perfect’ digital avatar boasts hundreds of thousands of followers, but after public backlash regarding transparency, its creators now disclose in the bio that the account is a “digital soul”.

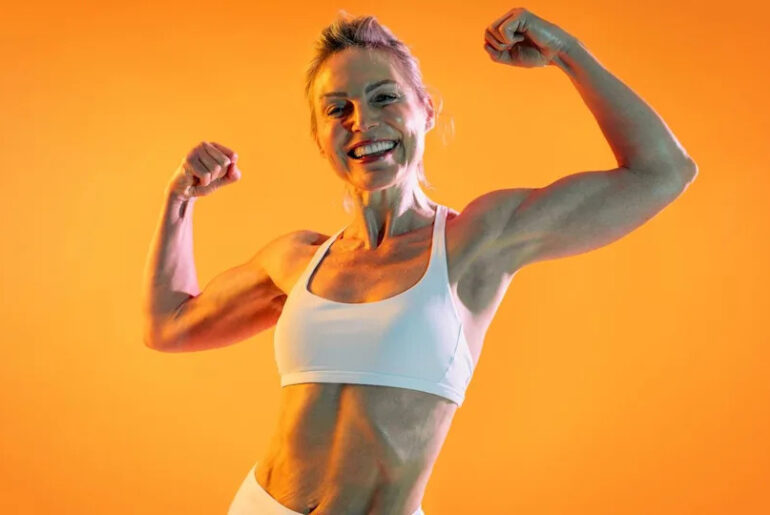

Can you decipher what’s real and what’s fake?

So, how can the average scroller figure out what’s real and what’s a bot?

For now, there are still a few visual clues you can spot if you look closely enough.

When analysing the viral Anderson Rhoe account, Mr Alimardani pointed out the overly polished skin, which, when combined with other “bizarre” glitches, can help the average scroller spot the red flags.

“The account showcases some good-quality AI-generated content, and by adding some ‘imperfect’ features like lighting and camera angles, they made it even more difficult to figure it out,” the expert said.

Other telltale slip-ups include distorted hands and fingers, strange or eerily quiet backgrounds, text that doesn’t make sense, and a lack of talking to camera. When the subject does speak, the audio will likely have an echo.

“Just remember, if it looks too perfect, it’s probably AI-generated and should be treated like a scam,” the expert said.

He warns that these clues won’t last forever, as the technology is improving so rapidly that ordinary users soon won’t be able to tell what is real anymore.

The grey zone

When a fake account is used to sell a real product, things get incredibly complicated.

Under the Australian Consumer Law, it is illegal for a business to engage in misleading or deceptive conduct.

Using AI to fake a 75-day body transformation to sell a fitness app is considered a violation of these rules.

But because Anderson Rhoe’s account appears to be international, it operates in a legal grey zone outside of our jurisdiction.

With the technology rapidly outpacing the law, Mr Alimardani believes that Australia urgently needs a stronger legislative framework to protect consumers.

“By the end of 2026, society will realise that nothing they see on the internet is trustworthy unless it is directly from a reliable source,” he warned.

He says we need strict new laws requiring clear disclosure on AI ads and ensuring creators, host platforms, and tech giants are held responsible.

“Social media platforms should be more vigilant about what can be posted, and what report mechanisms are available to flag AI-generated content,” he said.

Ultimately, Mr Alimardani says the government must step up and educate the public that the internet is now “quite different from how they remembered it in 2024 and 2025”.

News.com.au reached out to TikTok for comment.