Phase I: risk assessment modelling and tool development

Formulation of a Five-Step Misinformation Risk Assessment Model (MisRAM)

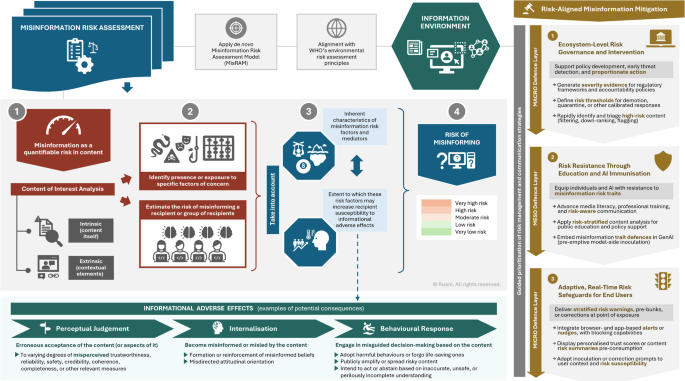

To begin, we established a structured roadmap to characterise and assess risk factors in the informational environment, specifically those associated with misinformation. We did this by drawing on WHO’s stepwise approach to risk assessment, originally devised for evaluating environmental hazards such as chemical and biological agents64,88. This roadmap, which we refer to as MisRAM (see Fig. 2), was designed to guide the structured development of tools capable of estimating the risk of misinforming recipients, based on the presence and nature of misinformation traits within any content of interest. In this study, we applied MisRAM specifically to diet, nutrition, and related health content.

The five-step MisRAM framework represented the underlying developmental logic to be used in Phase I to support tool construction. It guided the extraction, formalisation, and stratification of misinformation risk factors into a functional scoring instrument. These five standardised developmental steps, intended for use in any future tool built using MisRAM, are summarised as follows:

Step 1. Purpose-Oriented Problem Formulation (Risk Assessment Scope and Objective).

Step 2. Information Environment Analysis and Identification of Misinformation Risk Factors (Traits Extraction and Candidate Tool Items).

Step 3. Characterisation, Classification, and Stratification of Misinformation Risk Factors (Formal Item Generation, Risk Dimensions Mapping, and Risk Level Stratification).

Step 4. Risk Factor Presence or Exposure Qualification and Quantification (Tool Stratified Scoring Structure).

Step 5. Outcome Evaluation with Integrated Misinformation Risk Estimation (Final Five-Level Misinformation Risk Result).

Stepwise implementation of the MisRAM framework

The following five steps detail how the MisRAM framework was operationalised to construct the Diet-MisRAT tool.

Step 1. Risk Assessment Scope and Objective

We defined the scope and objectives of our risk assessment process, focusing on the public health threat posed by diet-health misinformation in medium- to long-form lay content, including headlines, subheadings, and other descriptive elements where present, as well as their relationship with the main material. Our goal was to create a scalable, multi-factorial, and stratified instrument89 capable of systematically estimating misinformation risk. We envisioned a structured prompting mechanism90 designed to be adaptable for both human and AI application, with potential for integration into automated and manual risk assessment workflows at scale91, ultimately enabling risk assessors, managers, and communicators (mainly relevant professionals, educators, researchers, and policymakers) to apply it alongside automated algorithmic systems for wide-scale impact.

Step 2. Traits Extraction and Candidate Tool Items

This step, comparable to hazard identification in environmental models, involved a methodical scan of historical and contemporary information environments, drawing from scientific literature, empirical analyses, and predictive modelling technologies. Salient patterns relevant to misinformation were identified and synthesised across sources through iterative review, comparison, and abstraction led by the tool developer. Data sources analysed included domain-relevant and psychosocial literature, multimedia content samples, case studies, case reports, judicial records, enforcement and warning materials from regulatory and professional bodies (e.g. Advertising Standards Authority, British Dietetic Association), and annotated dataset insights (e.g.92,93), among others.

Following this scan and synthesis, we extracted recurring content traits, features, and markers by comparing repeated occurrences across sources and retaining those consistently associated with an increased likelihood of misleading recipients. Examples include variations of risky or hazardous omissions, framing distortions, decontextualisation, covert incoherence, emotionally manipulative tone, epistemic overreach, and radical opposition to established scientific consensus or life-saving medical approaches, among many other high-risk characteristics observed across sources (see Supplementary Table S1 for further examples). We also distilled additional aspects that may serve as ‘precursors’ to misinformation risk, including a flawed or non-existent editorial process, undisclosed conflicts of interest, unrelated originator credentials (or lack thereof), reliance on unverifiable or subpar sources, and other mediating elements.

This step marked the initiation of item drafting, where each extracted risk factor was documented in a format conducive to future tool development. Through iterative cycles of content analysis and the extraction of recurring patterns consistently observed in high-risk materials, we generated a longlist of candidate items that reflected real-world misinformation risk traits and mechanisms observed across diverse information environments. The outcome was a practical repository of cues (the candidate list) for identifying misinformation risk factors in content of interest.

Step 3. Formal Item Generation, Risk Dimensions Mapping, and Risk Level Stratification

We formally generated tool items from the candidate list, classified them into interconnected misinformation risk dimensions (i.e. inaccuracy, incompleteness, deceptiveness, health harm; Supplementary Fig. S1 and Table S1), and applied a three-tiered risk stratification (low-, moderate-, high-risk items). Selected candidate items were converted into tool-ready prompts and formatted with clear supporting guidance notes and tailored response options reflecting real-world content characteristics. We sorted items based on their mode of action (e.g. manipulative framing, omission of adverse health effects) and intrinsic severity (e.g. potential to mislead or contribute to harmful choices). This informed which items (or combinations of content traits) warranted designation into a higher risk tier based on their mechanistic or cumulative impact. The aim was to prioritise the most consequential risk factors and reduce redundancy, refining the tool into a concise yet comprehensive structure.

Step 4. Tool Stratified Scoring Structure

Building upon the three-tiered risk stratification of items, we established the tool’s underlying scoring structure. Each response option within an item was assigned a base weight corresponding to the severity or risk profile of the misinformation factors it reflected. These weights were then multiplied by the item’s stratified risk level (low, moderate, high) as a coefficient to derive a final score per response option. This approach allowed for a severity-adjusted measurement of risk, a method seen in non-uniform scales weighing health content quality (e.g.94). By layering the scoring system, we were able to quantify both the density and severity of misinformation traits, and perform consistent comparisons across multiple content types.
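The severity-adjusted scoring described above can be illustrated with a minimal sketch. The tier coefficients and base weights below are hypothetical placeholders chosen for illustration; the actual Diet-MisRAT values are not given in this section.

```python
# Hypothetical tier coefficients (assumption: not the tool's real values).
TIER_COEFFICIENT = {"low": 1, "moderate": 2, "high": 3}


def option_score(base_weight: float, tier: str) -> float:
    """Score of one response option: base weight x the item's tier coefficient."""
    return base_weight * TIER_COEFFICIENT[tier]


# The same base weight contributes more when it sits on a higher-risk item.
print(option_score(2, "low"))   # 2
print(option_score(2, "high"))  # 6
```

Under this layering, a response option's contribution depends both on how severe the selected option is and on how consequential the item itself was judged to be.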

Step 5. Final Five-Level Misinformation Risk Result

In this final leg, we sought to combine the outcome of the previous steps to help estimate the overall misinformation risk of content analysed. By summing the weighted item responses, the tool produced a total score that was then translated into one of five predefined risk categories: very low, low, moderate, high, or very high risk. This tiered risk result was designed to support actionable interpretations for stakeholders and automated systems (see Fig. 1 and Supplementary Table S2 for examples). Risk estimation outputs could help inform individual decision-making (e.g. whether to trust or share content), institutional responses (e.g. moderation, redirection, correction), and systemic applications (e.g. algorithmic risk flagging, platform-level warnings or demotion, gamified inoculation prompts in end-user browsers, devices, or interfaces). Our outcome-oriented logic mirrors the WHO’s final risk characterisation step, where hazard, exposure, and dose-response are combined into a concluding decision-supporting risk statement. The first tool iteration was thus completed to facilitate rapid, scalable misinformation risk estimation across a variety of content materials, grounded in established WHO risk assessment principles.

Phase II: validation and refinement process

Following the development of the initial formal tool version, Phase II focused on validation and refinement through a series of structured testing rounds (Fig. 2).

Round 1: expert panel review, validation, and benchmark setting

To ensure content and construct validity, the first official version of the Diet-MisRAT underwent an in-depth review by an expert panel composed of two highly experienced professors with extensive research backgrounds and combined academic teaching experience exceeding 45 years. One expert brought specialised knowledge in nutrition science and dietetics, while the other contributed expertise in science education, pedagogy, and assessment, together offering an interdisciplinary perspective that informed the foundational validation and refinement process. The panel was not involved in the initial tool development, only in the refinement of the drafted version.

The expert panel reviewed each tool item for its usefulness and essentiality in assessing misinformation risk, including its clarity, sequence, and conceptual fit. They independently assessed the completeness, phrasing, and ordering of the response options, each of which was structured with the highest-risk choice presented first to orient the user and anchor risk scaling. The panel provided oral and written feedback on the interpretability of user-guidance instructions and the clarity of item-specific language, particularly where answer options contained mixed or qualitative elements.

The tool’s overall structure was evaluated for logical progression, coherence across thematically related items, and the clarity of subsections. The scoring system was also assessed by verifying that higher-risk responses were appropriately weighted with greater penalty values, and that lower- or zero-risk responses reflected proportionately lesser or neutral scoring. The panel’s feedback informed the merging or elimination of items where overlap or redundancy was identified, as well as adjustments to guidance wording and scoring to enhance usability, comprehensiveness, and interpretive consistency across users.

A priori benchmark development

To establish a reference standard for the most appropriate response options when applying the Diet-MisRAT, we developed an a priori benchmark dataset that served as the comparative foundation for subsequent human and AI testing rounds. This process involved the independent application of the tool by the developer and both Round 1 expert validators, followed by a structured item-by-item agreement analysis.

Step 1. Developer and panel tool application process

The tool developer first applied the Diet-MisRAT to a variety of medium- to long-length lay content (including headlines, snippets, subheadings, main body text, and editorial elements such as author credentials) to explore the tool’s performance across different formats, framings, and topic types from both reputable and questionable (yet popular) sources. This initial testing informed the selection of a moderate-risk web article that exhibited a mix of misinformation traits with varying severity and prominence, and also reflected the complex nature of real-world content typically encountered by general audiences. The developer then generated a complete set of benchmark responses for the sample content, based on the intended interpretation of each item and its accompanying guidance. These responses reflected the developer’s conceptualisation of how tool items were meant to be applied, taking into account the specific intent behind each item and the subtle distinctions the tool made between different types of misinformation risk traits. This response set served as the preliminary benchmark.

To evaluate and refine this benchmark, the expert-panel validators independently applied the tool to the same sample content. Neither validator had access to the developer’s answers, nor to the scoring allocations for each item or the overall outcome of their assessment. Each used only the item prompts, guidance text, and response options provided in the tool, which was purposefully designed to be self-explanatory.

Step 2. Agreement analysis and benchmark refinement

Besides computing Pearson’s correlation coefficients to assess alignment between the tool developer and expert validators, we also conducted an item-level agreement analysis using categorical comparisons. Responses from the tool developer and expert validators were compared on a per-item basis, treating answer choices as unweighted categorical selections. Each item response was classified according to whether it matched or diverged from the developer’s original selection. These comparisons were intended to identify patterns of agreement or discrepancy and to guide potential adjustments to the benchmark before subsequent testing rounds.

Where diverging responses were observed, a majority-rule approach was applied to determine whether a revision to the benchmark was warranted. If both expert validators selected the same alternative response that differed from the developer’s original answer, the benchmark was updated to reflect the consensus choice. If at least one expert validator matched the developer’s answer, the original benchmark was retained. Aiming to minimise the influence of individual bias, this procedure ensured that each benchmark response was based on a convergent understanding of the item’s purpose and confirmed through expert consensus.
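The majority rule can be expressed as a short sketch. The function and item names are illustrative, and one case is an assumption: the text does not say what happens if all three raters choose different options, so the sketch retains the developer's answer in that situation.

```python
def refine_benchmark(developer: str, validator_a: str, validator_b: str) -> str:
    """Return the benchmark answer for one item under the majority rule."""
    # Both validators agree on an alternative: adopt their consensus choice.
    if validator_a == validator_b and validator_a != developer:
        return validator_a
    # At least one validator matched the developer: keep the original.
    # Assumption: a three-way disagreement also keeps the original.
    return developer


# Hypothetical responses: (developer, validator A, validator B) per item.
responses = {
    "item_1": ("A", "A", "B"),  # one validator matches -> retained
    "item_2": ("A", "C", "C"),  # validators agree on C -> updated
    "item_3": ("B", "B", "B"),  # full agreement -> retained
}
benchmark = {item: refine_benchmark(*r) for item, r in responses.items()}
print(benchmark)  # {'item_1': 'A', 'item_2': 'C', 'item_3': 'B'}
```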

Round 2: pilot testing with postgraduate dietitians in training

Seven trainee dietitians based in the United Kingdom (see Appendix A for demographics) participated in a pre-test to evaluate the preliminary version of the Diet-MisRAT. Informed consent was obtained from all participants prior to the activity. The session was conducted in a meeting room within a hospital setting in March 2024, with all participants completing the task simultaneously over approximately 45 min. Each participant independently applied the tool to the same medium-to-long-form sample content, using either printed forms or a provided URL to access both the assessment questionnaire and the content to be analysed. Following the application, oral feedback was collected during a post-test group conversation, guided by questions on clarity, interpretation, and usability.

Round 3: testing with postgraduate students in nutrition science

The research was conducted in person in a classroom setting in March 2024, with postgraduate nutrition students in the United Kingdom voluntarily completing the activity simultaneously. Respondents were given approximately 45 min and provided with a URL to independently access the tool interface and sample content for analysis. One participant opted to complete a paper version instead. After completion, respondents were invited to provide open-ended oral feedback on the tool’s phrasing, interpretability, and usability.

A total of 50 respondents provided consent to participate in Round 3 of the study. Of these, 16 were excluded from analysis for abandoning the questionnaire partway through, and one additional respondent was removed due to unusually rapid completion (3 min and 6 s – indicative of random clicking). Consequently, the final sample size for analysis comprised 33 participants (see demographics in Appendix A).

Round 4: testing with highly experienced nutrition professionals

Since the tool demands both subject-matter familiarity and the capacity to interpret complex nutrition-related misinformation concepts, Round 4 involved testing with highly experienced nutrition professionals to evaluate the tool’s applicability, clarity, and interpretability under expert use. Fifteen senior nutrition professionals, each with extensive career experience ranging from around a decade (inclusion criterion) to over 30 years, took part in this round. Participants were based in the United Kingdom (except for one in the United States), and had recognised standing in research, higher education, science communication, clinical dietetics, and/or public health. The majority (87%, N = 13) held postgraduate qualifications at the master’s and/or doctorate levels (other demographics in Appendix A).

Participation was voluntary and conducted online independently at a time of each participant’s choosing during the collection period from April to June 2024. After providing informed consent, each participant used the tool to assess the same medium-to-long content sample, with an average completion time of 37 min, 36 s. Following this, both oral and written feedback (within the same questionnaire) were collected to capture expert reflections on usability and interpretive clarity. Responses were examined to assess the tool’s applicability in a professional context, and minor clarifications and refinements were made in light of this expert input.

Round 5: testing with ChatGPT under zero-shot prompt-based conditions

In this final testing round, we engaged ChatGPT, the end-user interface to the Generative Pre-trained Transformer (GPT) large language models (LLMs) developed by OpenAI and one of the most widely used multimodal generative artificial intelligence (GenAI) interfaces available at the time of this research. We instructed ChatGPT’s model 4o (labelled ‘great for most questions’) and subsequently model o3 (labelled ‘uses advanced reasoning’; Appendix B) to apply the Diet-MisRAT to the same medium-to-long-length sample content and to strictly follow the tool prompts and parameters, thereby generating a complete set of item-level responses for each test. Each test was initiated in a separate, newly opened chat, resulting in 10 fully independent testing datasets. All test runs were conducted under fully blind-scoring, untuned, zero-shot prompt-based conditions, meaning the models received no prior contextual learning, no exposure to benchmark answers, no human-in-the-loop feedback, and relied exclusively on the instructions embedded in the Diet-MisRAT tool.

While the use of generative LLMs like ChatGPT for instrument validation remains relatively new22,76, recent developments in in-context learning (ICL) techniques such as zero-shot and few-shot prompting have opened new opportunities for their application in tasks like fake news detection95,96. OpenAI has not publicly disclosed the exact architecture of its models, although they are described as loosely mimicking certain aspects of human reasoning through billions of interconnected nodes (often referred to as ‘artificial neurons’), forming complex associations between words, concepts, and contextual patterns97. These capabilities make user-friendly LLMs useful for evaluating and refining a tool’s performance.

In this study, we used GenAI-based testing as a final, complementary layer to the professional human rounds, not only to help accelerate the testing process but also to examine the model’s performance, speed, and scalability in automatically applying the tool under zero-shot prompting conditions. This phase further allowed us to explore how widely accessible AI systems like ChatGPT might be able to support real-world misinformation risk management with human oversight98, especially in light of their fallible or insufficient ability to do so autonomously74,99,100,101.

Scoring blindness across rounds

In all five rounds of Diet-MisRAT testing, human participants and ChatGPT completed the tool assessments independently and without access to the underlying weighting framework associated with each response option, the total possible scores, or the final risk characterisation (very low, low, moderate, high, or very high). The purpose of this design was to ensure that respondents relied exclusively on their interpretation of item prompts and risk-related cues, free from influence by the tool’s weighting structure or final scoring, thereby preventing any attempt to reverse-engineer a desired result or ‘game the system’. This set-up also allowed for a more objective evaluation of how clearly the items were understood and how consistently the tool could be applied across independent users and ChatGPT models.

Tool application speed

Only ChatGPT model o3 displayed a system-generated speed indicator, allowing reliable capture of task durations, which averaged 25 s per medium-to-long-length content analysis. While this is over 90 times faster than the 37 min, 36 s averaged by highly experienced nutrition professionals, such a duration may still prove limiting in high-volume scenarios if analyses are conducted sequentially. That said, with appropriate automated AI deployment, such as parallelised API solutions or batch-processing102, the tool could feasibly be scaled for simultaneous multi-piece analysis in future.
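For reference, 37 min 36 s is 2,256 s, roughly 90.2 times the 25 s average. The parallelisation idea can be sketched with Python's standard thread pool. The `assess_content` function is a hypothetical stand-in for one automated tool run (for example, a call to a model API); it is not part of the study and does no real assessment here.

```python
from concurrent.futures import ThreadPoolExecutor


def assess_content(text: str) -> dict:
    """Hypothetical placeholder for one automated Diet-MisRAT run."""
    # A real deployment would send the tool prompts and the content to a model.
    return {"chars": len(text), "risk": "not computed"}


def assess_batch(texts: list[str], max_workers: int = 4) -> list[dict]:
    """Assess several pieces concurrently; results keep the input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(assess_content, texts))


results = assess_batch(["first article text", "second article text"])
print(len(results))  # 2
```

With I/O-bound calls of about 25 s each, wall-clock time for a batch would be governed mainly by the number of concurrent workers and any rate limits of the service used.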

Statistical analysis

Statistical analyses and visualisations were performed using Microsoft® Excel® for Microsoft 365 MSO (Version 2503, Build 16.0.18623.20116, 64-bit), the open-access statistical program Social Science Statistics (Version 2018), Zoho Survey’s built-in statistical analysis features, and Zoho Analytics (Zoho Corporation Pvt. Ltd.). Round 5 testing was conducted using ChatGPT under an enterprise-level ChatGPT Team License within a private login-protected workspace environment (OpenAI, L.L.C.).

Analyses considered both individual item scores and the resulting total scores which contributed to overall misinformation risk classification as very low (0.0–20.9%), low (21.0–40.9%), moderate (41.0–60.9%), high (61.0–80.9%), or very high (81.0–100%). A granular five-band result classification matrix was preferred over a basic binary system, as it offered greater sensitivity to score variation and allowed for finer discernment between levels of risk69.
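The five-band classification can be sketched as a simple lookup. Two assumptions are made here: the percentage is taken as the total score divided by the maximum possible score, and it is rounded to one decimal place before banding, since the published band limits are stated to one decimal.

```python
# Lower bounds (in %) of each band, checked from highest to lowest.
BANDS = [
    (81.0, "very high"),
    (61.0, "high"),
    (41.0, "moderate"),
    (21.0, "low"),
    (0.0, "very low"),
]


def risk_band(total_score: float, max_score: float) -> str:
    """Map a total score to one of the five risk categories."""
    pct = round(100 * total_score / max_score, 1)  # assumption: % of maximum
    if not 0.0 <= pct <= 100.0:
        raise ValueError("score must lie between 0 and max_score")
    for lower_bound, label in BANDS:
        if pct >= lower_bound:
            return label
    raise AssertionError("unreachable: the lowest band starts at 0.0")


print(risk_band(63, 150))  # 42.0% -> moderate
```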

To assess how closely participants in Rounds 2 to 4 aligned with the a priori benchmark answers established in Round 1, we calculated Pearson’s correlation coefficients (r) between each participant’s item-level scores and the corresponding benchmark values, serving as an indicator of concurrent criterion validity. Interpretation of r values followed established guidelines: coefficients between 0.00 and 0.10 were considered negligible, 0.10–0.39 weak, 0.40–0.69 moderate, 0.70–0.89 strong, and 0.90–1.00 very strong correlations103. For human participant rounds, we did not conduct a test-retest evaluation (i.e. repeated administration with the same raters over time), so the temporal stability of Diet-MisRAT scoring for individual users remains to be established in future work.
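A minimal sketch of this comparison follows, computing Pearson's r from its definition and mapping it to the stated interpretation bands. Two choices are assumptions: the absolute value of r is used for labelling, and a coefficient of exactly 0.10 is treated as weak, because the published ranges share that boundary. The score vectors are invented for illustration.

```python
import math


def pearson_r(x: list[float], y: list[float]) -> float:
    """Pearson's correlation between two equal-length score vectors."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def interpret(r: float) -> str:
    """Label the strength of a correlation using the bands given above."""
    size = abs(r)
    if size >= 0.90:
        return "very strong"
    if size >= 0.70:
        return "strong"
    if size >= 0.40:
        return "moderate"
    if size >= 0.10:
        return "weak"
    return "negligible"


# Hypothetical item-level scores for the benchmark and one participant.
benchmark = [0, 2, 6, 3, 0, 4]
participant = [0, 2, 3, 3, 0, 4]
r = pearson_r(participant, benchmark)
print(round(r, 2), interpret(r))
```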

We noted and treated skipped responses in Rounds 2 to 4 as indicators of potential comprehension or interpretation challenges. Skipped responses were recorded and, where applicable, adjusted for in the analysis to ensure that incomplete data did not distort correlation outcomes. Participant feedback was also reviewed alongside these patterns and incorporated to inform refinements to the tool’s structure, content, and guidance.

We evaluated the internal consistency of the Diet-MisRAT as applied by the Round 4 cohort using Cronbach’s alpha, calculated across all items based on independently assigned responses. In line with established guidance, we interpreted this statistic as a conservative lower-bound estimate of reliability reflecting the minimum expected consistency, given that alpha coefficients may underestimate consistency when response values are non-equidistant or when multidimensionality is present65,66. Because Rounds 2 and 3 (postgraduate dietitians-in-training and postgraduate nutrition students) were designed primarily as interpretability and refinement rounds and showed higher item skipping and interpretive variability, we limited alpha estimation to the highly experienced nutrition professional cohort (Round 4), where responses were most complete and stable. Consequently, reliability evidence may not generalise to less experienced users, and additional reliability indices (e.g. split-half, inter-rater, and test–retest reliability) were not assessed, leaving reproducibility across cohorts and time points to be established.
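For readers unfamiliar with the statistic, Cronbach's alpha can be computed from a respondents-by-items score matrix as sketched below. The sketch assumes complete data (no skipped items) and uses sample variances throughout; the data are invented for illustration and are not study results.

```python
from statistics import variance


def cronbach_alpha(scores: list[list[float]]) -> float:
    """Cronbach's alpha; `scores` holds one list of k item scores per respondent."""
    k = len(scores[0])
    # Variance of each item across respondents.
    item_variances = [variance([row[i] for row in scores]) for i in range(k)]
    # Variance of each respondent's total score.
    total_variance = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum(item_variances) / total_variance)


# Hypothetical scores: 4 respondents x 3 items.
example = [
    [2, 3, 3],
    [4, 4, 5],
    [1, 2, 1],
    [5, 4, 5],
]
print(round(cronbach_alpha(example), 3))
```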

We used Pearson’s correlation coefficients (r) in Round 5 to examine both concurrent criterion validity, by comparing each model’s item-level scores with the a priori benchmark, and test–retest reliability, by comparing repeated applications of the tool by each ChatGPT model version (4o and o3). We treated the latter as a supplementary check rather than a replacement for human reliability testing. Additionally, we compared model consistency and performance both between the two ChatGPT versions and against earlier human testing rounds, using median correlation values and statistical significance.

In Round 5, we also applied standard performance metrics commonly used in natural language processing (NLP) model evaluations and algorithmic content classification tasks, namely: accuracy, precision, sensitivity (also referred to as ‘recall’), and F1 scores21,37,104,105,106,107,108,109. Each Diet-MisRAT item presented a set of mutually exclusive categorical response options, mapped to preassigned but undisclosed (blinded) misinformation risk weights, and required the model to select a single forced-choice answer. Because the items were not structured as binary yes/no or true/false labels, we recalibrated model performance comparisons based on response alignment to expert benchmark responses and their pre-set risk weights. This recalibration enabled the quantification of two potential model misjudgement tendencies: risk overestimation (overflagging) and risk underestimation (underflagging).

Accuracy was calculated as the proportion of exact matches over total items, reflecting the overall percentage of fully benchmark-aligned responses selected by either model autonomously, solely relying on tool prompts, item guidance, and response options, without any prior training dataset or access to expert benchmark responses. Precision was defined as the proportion of exact matches among all exact-match responses and overflagged responses, gauging the model’s ability to avoid overflagging or overestimating risk. Sensitivity represented the proportion of exact matches among all exact-match responses and underflagged responses, measuring how well the model captured benchmark-flagged risks without underestimation. F1 score was computed as the harmonic mean of precision and sensitivity, penalising both risk overflagging and underflagging in a single metric. Performance formulas can be found in Supplementary Table S5.
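The definitions above can be sketched as follows. This is an illustration derived from the prose, not a reproduction of Supplementary Table S5. It assumes each response is represented by its risk weight and that options within an item carry distinct weights, so that equal weights imply an exact match. The weights shown are hypothetical.

```python
def performance(model: list[float], benchmark: list[float]) -> dict:
    """Accuracy, precision, sensitivity, and F1 from per-item risk weights."""
    exact = sum(m == b for m, b in zip(model, benchmark))
    over = sum(m > b for m, b in zip(model, benchmark))   # overflagged
    under = sum(m < b for m, b in zip(model, benchmark))  # underflagged

    accuracy = exact / len(benchmark)
    # Guards return 0.0 where a ratio would otherwise divide by zero.
    precision = exact / (exact + over) if exact + over else 0.0
    sensitivity = exact / (exact + under) if exact + under else 0.0
    denominator = precision + sensitivity
    f1 = 2 * precision * sensitivity / denominator if denominator else 0.0
    return {
        "accuracy": accuracy,
        "precision": precision,
        "sensitivity": sensitivity,
        "f1": f1,
    }


# Hypothetical weights for 10 items: one underflagged, one overflagged.
benchmark_weights = [6, 2, 0, 6, 2, 0, 2, 6, 0, 1]
model_weights = [6, 2, 0, 3, 4, 0, 2, 6, 0, 1]
metrics = performance(model_weights, benchmark_weights)
print(metrics["accuracy"])  # 0.8
```

In this example, eight of ten items match exactly, so precision and sensitivity both equal 8/9 and the F1 score takes the same value.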

All statistical comparisons were conducted using two-tailed tests, applying a significance threshold of p < 0.01.