Dr. Maurice Sholas has a beautiful, challenging calling — he cares for very sick children. He takes on the saddest of cases, and works with families so kids with spina bifida or traumatic injuries can still “win” at life. For some, that means gaining the ability to visit the bathroom independently.

But lately, Sholas has been put in a no-win situation by artificial intelligence. His likeness was used to create a deepfake video hawking supplements — specifically targeting Black consumers. Try as he might, he still hasn’t been able to remove all the various videos that have landed on places like TikTok and Twitter.

So instead of caring for very sick children, the Harvard-educated New Orleans doctor now spends time fighting AI and learning about intellectual property law.

“What’s frustrating is that it costs money, time, effort, and relationships to protect something that should be intrinsically mine,” he told me during our interview for The Perfect Scam podcast I host for AARP.

There’s been a lot of talk about the problem of deepfake videos and politics: how activists might change an election by, quite literally, putting words into a leader’s mouth. I believe consumers have become relatively sophisticated at spotting the more outlandish fakes, such as President Trump wearing papal garments. On the other hand, fake ads, especially those involving lesser-known figures, can be harder to discern. And they might ultimately cause more damage.

Sholas told me he knows of at least one person who bought the supplements based on the fake videos. After he told his story on local television, a victim reached out.

Sholas is not identified in the video; his appearance is altered slightly, and a fake voice is dubbed onto it. But his lab coat nametag is visible.

There is very little a victim can do to get fake content removed from the Internet. Sholas first reached out to the account that posted the videos, which ultimately blocked him. The very tool used to abuse his identity was now being used to prevent him from defending himself. Initially, he says, social media companies ignored his complaints. Later, after the local story aired, some services took action, but by then, copies of the video had spread across multiple services. He consulted a lawyer and was redirected to a PR company.

“They said the best thing you could do is hire a PR firm basically to go out there and do a sweep of the internet and push positive content to counteract whatever misinformation is there,” he said. That kind of search engine optimization could cost up to $20,000, he was told. Instead, he has taken to posting self-made content of his own.

“When someone borrows, to use a kind word, or steals, to use a real word, it puts me at risk, it puts my medical license at risk, and it puts my livelihood at risk. And to protect all of that, there’s nothing I can do as a small guy but spend more money,” he said.

Fake video is far more pervasive on social media than most people realize, says Frank McKenna, chief fraud strategist of a company called Point Predictive. He’s also the author of the popular Frank on Fraud newsletter.

“I see these all over TikTok, all over Instagram, all over Facebook. They’re inundating people’s news feeds; the social media platforms I don’t think are doing enough to kind of control the problem,” he told me.

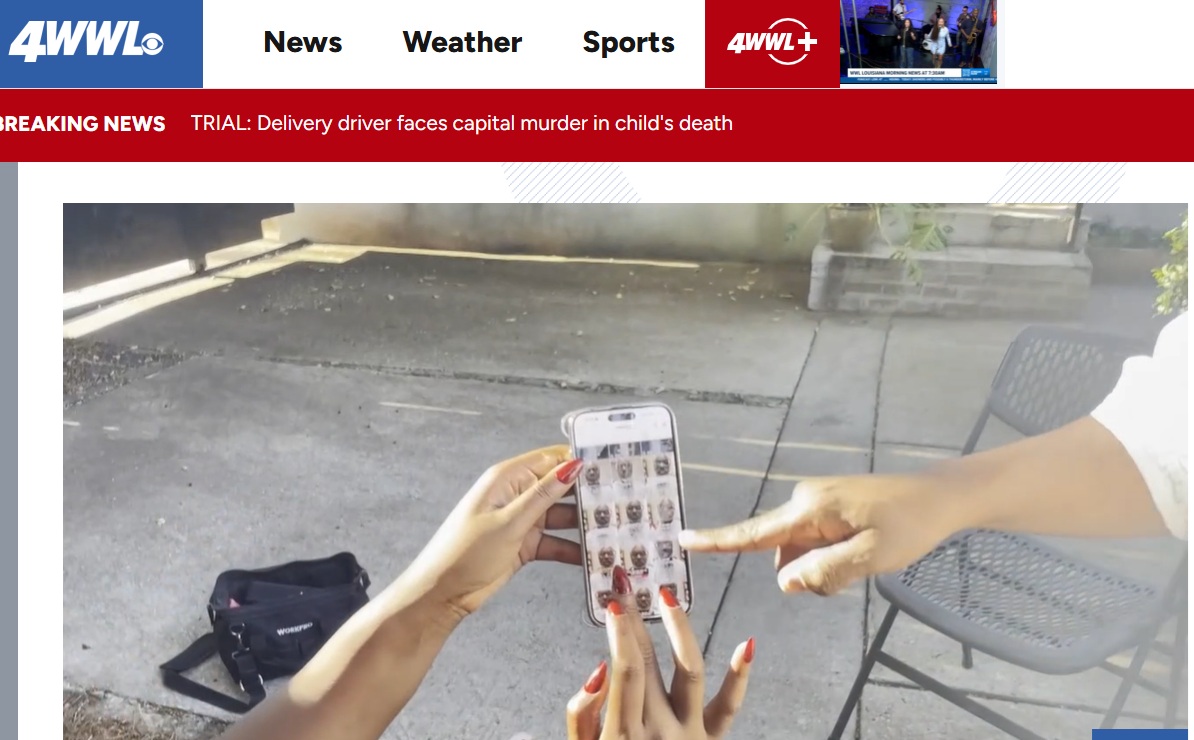

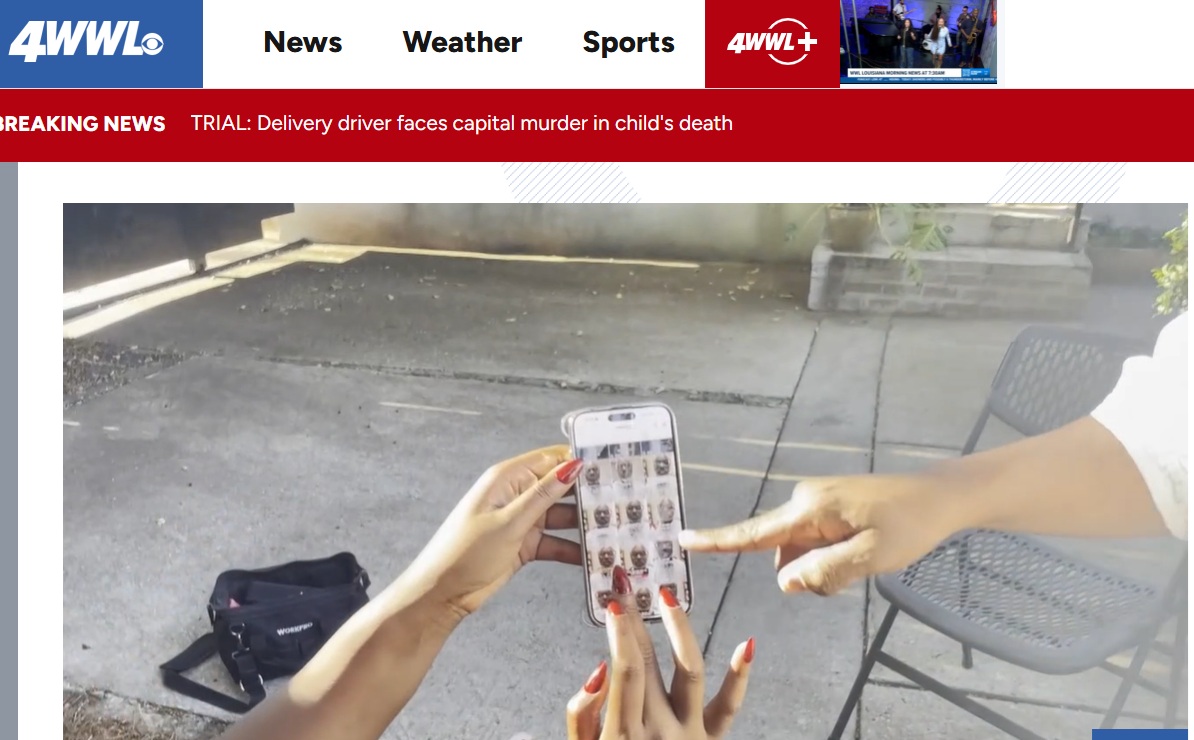

Dr. Maurice Sholas shows a reporter the deepfake videos he found. (WWLTV.com)

NBC’s Al Roker was the victim of a similar deepfake attack about a year ago. You can watch his interview about it at this link.

“I think people probably don’t realize how many deep fakes they’re seeing as they scroll through social media. From my experience, it’s at least half the videos that you’re seeing … there’s some element of AI generation in those videos. And that’s only going to get worse,” McKenna said. “The case will be that most of the content you’re looking at online is AI-assisted in some way … So people are going to have to get accustomed to the fact that they’re going to have to question pretty much everything. … These other celebrity deep fakes, I think, are going to surprise a lot of people, because they’re becoming more and more common.”

How hard is it to make fake videos like the ones that use Sholas’ likeness? Not hard at all, McKenna says.

“Using information off of YouTube videos, Instagram videos, or Facebook videos that you post, the criminals and scammers can take that content and put those into AI generating videos, and make you say anything that they want,” he said. “So just a few seconds of video can create these…they call them AI avatars, and they can basically make you sell vitamins or make you sell crypto investments and things like that. So it’s not hard at all, anybody can do it and a lot of scammers are.”

Perhaps the most alarming part of this dark new trend: consumers are overconfident in their ability to spot fakes.

“The thing about AI deep fakes is 60 percent of the population thinks they can spot them, but in reality, I think a study … found that only 0.1 percent of people can actually identify those deep fakes,” he said.

I hope you’ll listen to the full interviews I conducted with Sholas and McKenna in this recent episode of The Perfect Scam. But if podcasts aren’t your thing, here’s a partial transcript.

———————Partial Transcript—————————

[00:04:09] Dr. Maurice Sholas: So a friend of mine sent me a link on TikTok, and said, “Is this you?” And so I’m, I was relatively new to TikTok at the time; I’m trying to be the old guy that stays current on all the new apps (laughs) and I clicked on the video and it was a likeness of me hawking vitamins, K2D3, which is something I’ve never done, and something I’ve never recorded. And I was just stunned to see what looked like me and words coming out of my mouth. It wasn’t me. Now the voice didn’t sound like me, but it definitely looked like my face.

[00:04:44] Clip: I asked, do you want me to give you the safe answer or the truth? He said, the truth.

[00:04:51] Bob: That’s the sound from a TikTok video of someone who looks like Maurice hawking a vitamin saying things Maurice has never said. Seeing this is shocking for Maurice. It takes a moment, but pretty quickly the doctor figures out where the germ of that video came from.

[00:05:09] Dr. Maurice Sholas: It was a background that was pulled from a story I told, one of my most popular videos where I talked about how one of my patient’s brothers hit the panic button on my name badge, and the security busted into my visit thinking I was in trouble when I was really just being hugged and kissed to death by a, a 3-year-old. (laughs)

[00:05:29] Bob: The authentic video is purely charming. This fake video is alarming, and there are more like it. Soon Dr. Maurice, he calls himself Doc MoSho, finds himself a host of similar videos all over TikTok. Criminals have taken a small segment of one of his videos and created a host of fakes designed to sell this supplement. Why was he targeted? Maurice thinks he knows exactly why. The ads targeted Black Americans.

[00:05:59] Dr. Maurice Sholas: Yeah, we are closer to 16, 18% of the population. I live in New Orleans, which is a 60% Black city, so there are pockets of Black Americans that are vital members of communities all over, and to establish trust, people know that when your doctor shares the same representation of you, it helps with that trust. So they picked me specifically to talk about that product, to talk to the population they wanted to buy supplements.

[00:06:23] Bob: And supplements that were allegedly essential because they’re Black, right?

[00:06:29] Dr. Maurice Sholas: Correct, supplements that were allegedly essential because they’re Black, when, you know, there’s no data showing those supplements are more or less essential to Black people, there’s no data showing that these supplements are coated in a way that makes melanated skin process them better than if they were given to non-melanated people. It just was a flim flam that used my reputation to sell a product.

[00:06:51] Bob: The flim flam that used his reputation to sell a product. All those years, decades, making very sick children and their families trust him, abused, violated on bogus supplement ads.

[00:07:06] Dr. Maurice Sholas: It felt like the 20 years I’ve spent in medical practice, all of the work I did to become a first generation doctor and get an MD PhD from Harvard University and to really break my way into a specialty that most people had never heard of before, they heard of it from me, was just taken without my permission to do something I would never do or say.

[00:07:29] Bob: Is there some kind of mistake? The voice isn’t his. But when he zooms in on the video, his name is clearly on the lab coat, so he reaches out to whoever posted the video.

[00:07:43] Dr. Maurice Sholas: I tried to respond to the video, and, and I was like, hey, this isn’t me.

[00:07:49] Bob: Silence. He gets no response. But he does get a reaction.

[00:07:55] Dr. Maurice Sholas: What they did was they didn’t take it down, they added age lines to my mouth and added like some little sets to look like some of my teeth were missing or different, so they could say it’s not you anymore, ’cause it’s older, or they don’t have the same, I have good teeth, I’m proud of my teeth. But they could say it wasn’t you. But they didn’t take it down, they just tried to alter the digital image to make it so they could pretend like it wasn’t me or utilizing my likeness.

[00:08:20] Bob: So it sounds, you’re saying you complained, first you complained directly to the creator who…

[00:08:26] Dr. Maurice Sholas: Yes.

[00:08:26] Bob: …then made this crazy adjustment, right?

[00:08:29] Dr. Maurice Sholas: Yes.

[00:08:30] Bob: And then he escalates the complaint.

[00:08:34] Dr. Maurice Sholas: Uh, so I reported it to TikTok and TikTok basically didn’t do anything. I guess I don’t have enough followers and I’m enough of a nobody on there that they didn’t respond. I don’t have a PR firm. So TikTok just ignored me.

[00:08:49] Bob: Frantic, Maurice turns to journalism for help, to WWL-TV. That’s where we got the audio for the fake doctor.

[00:08:58] Dr. Maurice Sholas: I actually have a good friend that’s a news anchor here, I reached out to her and said have you ever heard of this, what’s going on. She ended up talking to her producers. They sent a reporter out to talk to me and that reporter reached out on my behalf. As soon as the reporter reached out, hours later the account that was offensive was banned and taken down.

[00:09:18] Bob: But that is hardly the end of the story. For one thing, Maurice quickly learns there’s already been real-life consequences.

[00:09:26] Dr. Maurice Sholas: When I actually, the story ran here in New Orleans on TV, my friend’s husband that saw the story on TV and said, “Oh gosh, I actually bought that because I thought it was you.”

[00:09:37] Bob: (chuckles)

[00:09:39] Dr. Maurice Sholas: And I was like, bud, that wasn’t me! He said, “I’m sorry, I didn’t know.” I was like, it didn’t link to me or any of my accounts. It, so why would I be saying something about a product I never use in real life, you’ve never heard me talk about in real life, and it didn’t link to any of my real-life accounts?

[00:09:57] Bob: And at this point Maurice learns his trouble is only beginning. Those TikTok videos were removed.

[00:10:04] Dr. Maurice Sholas: By that point, the material that spread from TikTok to Instagram and also to X, when I tried to reach out to Instagram to get them to remove this TikTok generated content, again, just ignored me. I made my account verified to see if I could get more traction, and it was just literally like whack-a-mole; as soon as you get one thing, a video would pop up somewhere else.

[00:10:27] Bob: With, but this was with Instagram. Are, you actually paid for the verification process?

[00:10:31] Dr. Maurice Sholas: I did. I paid for the verification process on the advice of my attorney because they said, if you’re verified it gives you more rights and privileges, so I feel like I had to pay for the privilege of being me.

[00:10:41] Bob: Oh my God. And then it didn’t work anyway, right?

[00:10:44] Dr. Maurice Sholas: It didn’t work anyway. They were just, sorry, bud. Kick rocks. Just wait for it to blow over.

[00:10:50] Bob: Meanwhile, the problem keeps getting worse.

[00:10:53] Dr. Maurice Sholas: There were more videos that they used my likeness or something similar to like, my likeness. Again, I’m bald and I have a gray beard. They tried to make my beard darker; they tried to take in and take out of some of my teeth. They added some frown lines to my forehead and my cheeks to make me look a little older. I am 55, but I’m proud that I’ve got good skin and not too many wrinkles, so they got plausible deniability. And so every time I would say that’s me and I never said that, they eventually, the creator blocked me so I couldn’t see it anymore or any of these things so I had to have another person say, is it down yet? (laughs)

[00:11:31] Bob: Okay…

[00:11:31] Dr. Maurice Sholas: So it’s something to be blocked from yourself.

[00:11:34] Bob: (laughs) This is such like a Kafkaesque odyssey you’re describing to me. That’s, first of all, like we’re running past, it’s unnerving to have someone make a video of you that’s an older you that you’ve got to look at. That’s weird, right?

[00:11:48] Dr. Maurice Sholas: Yeah.

[00:11:49] Bob: Yeah, and that’s the smallest offense that I’m hearing of all these things, but then this could still be going on and now the tools of this tech company are being used so that you can’t even defend yourself, you’re protected from seeing it.

[00:12:01] Dr. Maurice Sholas: Correct.

[00:12:02] Bob: That’s awful.

[00:12:03] Dr. Maurice Sholas: Correct. They, so what they started doing is they started systematically blocking me on all the platforms that I was complaining on. So I couldn’t see whether or not the information was down.

[00:12:13] Bob: Maurice keeps talking to his lawyer, keeps looking for some kind of solution. The answers aren’t comforting.

[00:12:21] Dr. Maurice Sholas: And when I talked to the lawyers, what became clear to me is that this is a way that technology really outstrips or outpaces the rules. We had a law that actually was passed last year in Louisiana’s legislative session, but it was vetoed by our governor, that would have given us some sort of redress for people misappropriating and misusing our likenesses. That’s important here in Louisiana because we have a film industry that’s based here, so you can imagine if you’re Meryl Streep and somebody doesn’t want to pay your rate, but they just use you to do what they need to do, boom, they can get a lot of traction. But that law was shut down and there’s really nothing at the federal level that we can lean to. I was talking to my attorney and I was like, what can I do? And she said, “Send me, now that we know somebody bought it, we can actually see who that actual distributor/manufacturer is.”

[00:13:10] Bob: Ah-ha.

[00:13:10] Dr. Maurice Sholas: Because the other part about this, Bob, is that even if you could figure out what rule of law applied to this, which I told you was already dicey, then you have a jurisdiction issue. Because what happens is the product manufacturer typically hires a separate company to do the marketing. So the product manufacturer can say, I didn’t really misuse you, that was the marketing company. And the marketing company can say, I didn’t misrepresent you, because the product company told me it was cool. So it’s like fingers pointing back and forth and none of this matters if those companies are not based in the US.

[00:13:44] Bob: And boy, are you getting an education in all this very quickly.

[00:13:47] Dr. Maurice Sholas: Yes, I never thought I would want to understand the ins and outs of intellectual property rights and how they apply to an individual and their likeness. I’m not an actor or a famous person, but I’ve learned way more about this than I thought. So in talking with the attorney, they brought in a crisis management attorney…